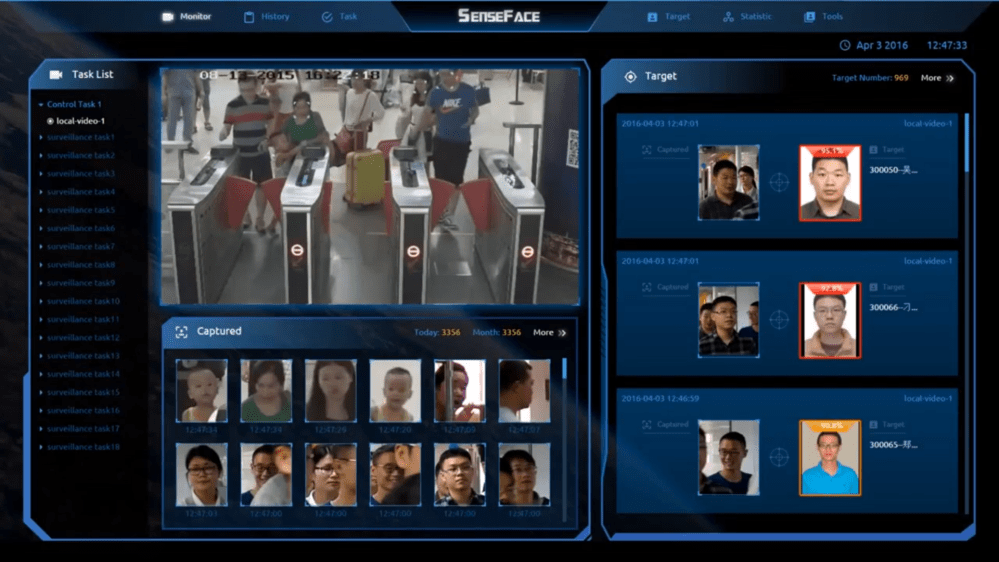

This research focuses on the development of “safe city” surveillance infrastructure systems in Kuala Lumpur, Malaysia. These projects are built by firms that are involved in the surveillance of Uyghurs and other Muslims in Northwest China and that have been granted protected “AI Champion” status by Chinese state authorities. The systems include a web of face-recognition-enabled surveillance cameras, command centers, data storage centers, data interface platforms, portable data assessment tools and rapid-response capacity building. The research will assess how this surveillance infrastructure is used in Kuala Lumpur to affect the lives of thousands of undocumented immigrants and refugees, as well as citizens. It will also consider how this infrastructure project demonstrates the leading edge of the Chinese model of international technology development, a key focus of the overall China Made research initiative.

In Kuala Lumpur, Chinese-built face-recognition-enabled camera systems have the potential to drastically reduce the autonomy of undocumented immigrants and asylum seekers from South Asia, Myanmar, China and elsewhere. As a leading recipient of Chinese international technopolitical development, Malaysia presents a particularly interesting case study of the Chinese model of development in an Asian democracy. In some countries, such as Zimbabwe and Ecuador, Chinese face-recognition-enabled surveillance systems are being adopted at a national scale by powerful regimes. In other states, such as Malaysia, the relationship between Chinese technology and politics is more uneven. For instance, there are deep divisions among different populations of Chinese Malaysians, who make up one-third of the total population. These divisions foster resistance to new infrastructure development among some who see it as Chinese state overreach into Malaysian society, even as around 20 million Malaysians continue to use the Chinese social media app WeChat as a primary means of communication. The perception and effects of China-made surveillance infrastructure are further complicated by Malaysia’s status as a Muslim-majority democracy that is also one of the largest receivers of Muslim refugee populations in Southeast Asia. The central problem this study will analyze is the tension in Kuala Lumpur between democratic political systems, Chinese technological implementation and marginalized refugee populations.

This research will build on my past work on Chinese surveillance systems in the Xinjiang region in Northwest China to consider the way illiberal policing systems change when placed in different political and social contexts. In Northwest China such infrastructure projects are framed as counter-terrorism systems that assess and control Uyghurs and other Muslim populations. An integrated web of “safe city” projects is used in part to predict which Muslims are “pre-criminals” based on patterns in their daily behavior, their appearance, and data found on their devices (Byler 2019). Through my past research I obtained thousands of internal police files describing the surveillance systems of Chinese police departments in the Uyghur region. I will draw on my assessment of these files, and on the goals and outcomes of the system in Xinjiang, to develop a comparative analytic for what I observe in Malaysia. The interview-based materials I collect in refugee communities (including the diaspora Uyghur community), among the broader Malay public, and with security technology workers in the city will serve as critical empirical data that will ground my analysis of the differences and similarities between the two cases.

This analysis, in turn, will provide a valuable lens through which to assess the role of AI-enabled surveillance infrastructure in the China model of development. In July 2017 the Xi administration elevated AI development to a nationwide strategic initiative, earmarking 300 billion dollars for investment in the industry by 2030. Among the stated goals of this initiative was that Chinese industry and ideas become the “strongest voices in cyberspace,” surpassing the strength of Western companies. The four largest Chinese firms connected to the Kuala Lumpur project, Alibaba, SenseTime, Yitu and Tencent, have all been designated by Chinese state authorities as possessing “core AI technologies” integral to the goal of establishing international technology standards and, in this case, extending the intelligence-gathering capacities of Chinese security systems. While in Xinjiang the systems built by these companies targeted minority Muslim citizen populations, my initial findings indicate that noncitizen Muslims are their primary target in Kuala Lumpur.

Ultimately this research will show how the design and implementation of AI-enabled surveillance systems affect the lives of people in ways that correlate with their ethno-racial, class, and citizenship position. As an ethnographic project it will seek to observe how infrastructural systems produce dispositions that channel behavior and, in a more aggregate sense, lifeworld temporalities (Oakes 2019). I anticipate that it will show that in some cases hidden forms of power emerge from relations between infrastructural elements, while in other cases they come from designed “scripts of action” (Bray 2007). While some in Malaysia may see digital and biometric surveillance systems as a source of security, the project will likely demonstrate that they also produce new forms of anti-refugee racialization. This is significant in that it will build on the work of Ruha Benjamin (2019) and others to show how surveillance systems can reproduce harmful racialized logics in new locations. Rather than seeking to prove that marginalized people are not surveilled well enough, as is sometimes argued in discussions of algorithmic bias, the project will consider how surveillance infrastructures create material forms and discursive logics that center on controlling and disciplining marginalized people. This case will show that a key concern in security infrastructures, broadly speaking, and in the China model of security infrastructure development in particular, should be the protection of a right to difference for marginalized people.

Works Cited

Benjamin, Ruha. (2019). Race after technology: Abolitionist tools for the New Jim Code. Polity Books.

Bray, Francesca. (2007). “Gender and technology.” Annual Review of Anthropology, 36, 37-53.

Byler, Darren. (2019). “Ghost World.” Logic, 7, 89-109.

Oakes, Tim. (2019). “China Made: Infrastructural Thinking in a Chinese Register.” Made in China Journal, 2, 66-71.

Byler, Darren. (2020). “The digital enclosure of Turkic Muslims.” Society & Space Magazine, December 7, 2020.

Byler, Darren. (2021). Chinese Infrastructures of Population Management on the New Silk Road. Wilson International Center for Scholars.